A robots.txt file looks small. It may only have a few lines. But one small mistake in this file can create a big SEO problem. That is why the topic “generate robots.txt files spellmistake” is more important than many beginners think.

Imagine working hard on your website. You write helpful pages. You post good content. You add images, links, and a sitemap. But then one tiny mistake in your robots.txt file tells Google not to crawl your best pages. That can be a serious problem. Your pages may not show in search results the way they should.

In this article, we will talk about generate robots.txt files spellmistake in a very simple way. We will explain what it means, why robots.txt matters, how the file works, what rules you must know, and what common mistakes can hurt your SEO. The goal is simple. By the end, you will understand how to avoid small errors that can cause big search engine problems.

What Does Generate Robots.txt Files Spellmistake Mean?

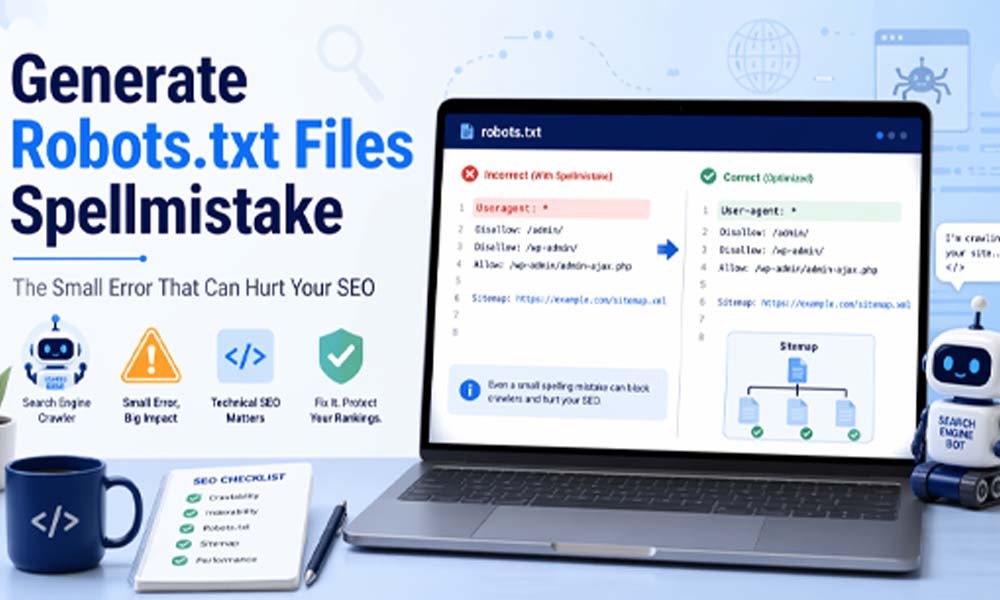

The phrase “generate robots.txt files spellmistake” may sound a little strange at first. But the meaning is simple. It means spelling mistakes, typing errors, or format problems that happen while creating a robots.txt file. These mistakes can happen when someone writes the file by hand or uses a tool without checking the result.

For example, the correct rule is User-agent. But many beginners may write Useragent, User Agent, or User_Agent. These small changes may look harmless. But search engines need the correct format. If the words are wrong, the rule may not work properly.
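A check like this can be automated. Here is a minimal Python sketch, not an official validator. It assumes the only valid rule names are the four covered in this article, and the function name find_misspelled_directives is made up for this example. It uses Python's standard difflib module to suggest the closest correct spelling.

```python
import difflib

# Assumption: only the four rule names discussed in this article count as valid.
KNOWN_DIRECTIVES = ["user-agent", "disallow", "allow", "sitemap"]


def find_misspelled_directives(robots_text):
    """Return (line_number, found_name, suggestion) for each unknown rule name."""
    problems = []
    for number, line in enumerate(robots_text.splitlines(), start=1):
        line = line.split("#", 1)[0].strip()  # drop comments and outer spaces
        if not line:
            continue
        name = line.split(":", 1)[0].strip().lower()
        if name in KNOWN_DIRECTIVES:
            continue
        # Suggest the nearest known name, if any is reasonably close.
        close = difflib.get_close_matches(name, KNOWN_DIRECTIVES, n=1, cutoff=0.6)
        problems.append((number, name, close[0] if close else None))
    return problems


print(find_misspelled_directives("Useragent: *\nDisallow: /admin/\n"))
# [(1, 'useragent', 'user-agent')]
```

The sketch only looks at rule names. It does not check paths or URLs.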

This is why generate robots.txt files spellmistake is a useful topic for website owners. Many SEO problems start with small technical errors. A missing dash, a wrong file name, or a bad sitemap link can confuse search engine bots. And when bots are confused, your site may not be crawled the right way.

Why Robots.txt Matters for SEO

A robots.txt file helps search engines understand where they should go on your website. Search engine bots, like Googlebot, visit websites to read pages. This process is called crawling. The robots.txt file gives these bots simple instructions before they start crawling.

Think of your website like a big building. Some rooms are open for guests. Some rooms are private. Robots.txt works like a small sign at the front door. It tells search bots, “You can visit this area, but please do not enter that area.” This helps keep your website clean and organized for search engines.

Robots.txt also helps with crawl budget. Crawl budget means the number of pages a search engine may crawl on your site during a certain time. If bots waste time on useless pages, they may miss your important pages. A clean robots.txt file helps bots focus on your best content. That is why fixing generate robots.txt files spellmistake issues can support better SEO.

How a Robots.txt File Works

A robots.txt file is placed in the root folder of your website. That means it should be found at a simple address like this:

https://example.com/robots.txt

When a search engine bot comes to your site, it usually checks this file first. The bot reads the rules inside the file. Then it decides which pages or folders it can crawl and which ones it should avoid.

For example, if you do not want search engines to crawl your admin area, your robots.txt file may include a rule that blocks /admin/. This does not remove the page from the internet. It only tells search bots not to crawl that area, and a blocked URL can still show up in search results if other sites link to it. That is why robots.txt should not be used as a strong security tool. Private pages should be protected with passwords.

This is also where generate robots.txt files spellmistake problems can begin. If the file is in the wrong place, search bots may not find it. If the rules are written badly, bots may not follow them correctly. So the file must be easy, clean, and correct.

Basic Robots.txt Rules You Must Know

A robots.txt file uses a few simple rules. These rules are called directives. The most common ones are User-agent, Disallow, Allow, and Sitemap. Each one has a clear job.

User-agent tells which bot the rule is for. For example, you can write rules for all bots or for a specific bot like Googlebot. Disallow tells bots which page or folder they should not crawl. Allow tells bots which page or folder they can crawl, even if a larger folder is blocked. Sitemap tells bots where your sitemap file is located.

Here is a very simple example:

User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml

This tells all bots not to crawl the admin folder. It also shows where the sitemap is. Simple, right? But the format must be correct. If you write Useragent instead of User-agent, it may not work as expected. This is why generate robots.txt files spellmistake can become a real SEO issue.
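To see how a bot might read this example, you can load it into Python's built-in urllib.robotparser module. This is only a sketch. Python's parser is one implementation, and real search engine bots may handle edge cases differently. The page URLs and the bot name ExampleBot are made up for the demo.

```python
from urllib.robotparser import RobotFileParser

EXAMPLE = """\
User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(EXAMPLE.splitlines())  # read rules from text, no network needed

# "ExampleBot" is a hypothetical bot name; the "*" group applies to it.
print(parser.can_fetch("ExampleBot", "https://example.com/admin/settings"))  # False
print(parser.can_fetch("ExampleBot", "https://example.com/blog/post"))       # True
print(parser.site_maps())  # ['https://example.com/sitemap.xml']
```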

Common Generate Robots.txt Files Spellmistake Errors

One of the most common mistakes is writing the main rules incorrectly. For example, User-agent is sometimes written without the dash. Some people write Useragent, User_agent, or User Agent. These small changes can break the meaning of the rule.
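The short sketch below shows what such a typo can do in practice. It uses Python's standard urllib.robotparser, which ignores rule names it does not know. Other crawlers may be more or less forgiving, so treat this as an illustration, not as a description of how any particular search engine behaves. The helper name admin_is_crawlable and the bot name ExampleBot are made up.

```python
from urllib.robotparser import RobotFileParser


def admin_is_crawlable(robots_text):
    """Ask Python's parser whether /admin/ may be crawled under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser.can_fetch("ExampleBot", "https://example.com/admin/")


correct = "User-agent: *\nDisallow: /admin/\n"
misspelled = "Useragent: *\nDisallow: /admin/\n"  # missing dash

print(admin_is_crawlable(correct))     # False: the block works
print(admin_is_crawlable(misspelled))  # True: the rule is silently ignored
```

In this parser, the misspelled line is skipped, so the Disallow rule that follows belongs to no bot and has no effect.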

Another common problem is the wrong file name. The file must be named exactly robots.txt. It should be lowercase. If someone names it Robot.txt, Robots.TXT, or robots.text, search engines may not read it correctly. This is a very simple mistake, but it can create serious SEO trouble.

Formatting mistakes are also common. A missing colon, extra spaces, wrong slashes, or a broken sitemap link can cause problems. For example, a sitemap line should look clean like this:

Sitemap: https://example.com/sitemap.xml

If the sitemap URL is wrong, search engines may take longer to find your important pages. So when people talk about generate robots.txt files spellmistake, they are often talking about these small but risky errors.
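A simple script can catch some of these sitemap errors. The sketch below is a rough check under stated assumptions: it only confirms that each Sitemap line holds a full http or https URL, and it does not visit the URL to see if the sitemap really exists. The function name check_sitemap_lines is made up for this example.

```python
from urllib.parse import urlparse


def check_sitemap_lines(robots_text):
    """Return (line_number, message) for each Sitemap line that is not a full URL."""
    issues = []
    for number, line in enumerate(robots_text.splitlines(), start=1):
        name, _, value = line.partition(":")  # split at the first colon only
        if name.strip().lower() != "sitemap":
            continue
        url = value.strip()
        parts = urlparse(url)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            issues.append((number, f"not a full URL: {url!r}"))
    return issues


print(check_sitemap_lines("User-agent: *\nSitemap: /sitemap.xml"))
# [(2, "not a full URL: '/sitemap.xml'")]
```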

Generate Robots.txt Files Spellmistake and Blocked Pages

One of the biggest risks of a robots.txt mistake is blocking the wrong pages. This can happen when a website owner adds a rule without fully understanding it. For example, they may want to block one private folder but accidentally block the whole website.

A dangerous mistake can look like this:

User-agent: *
Disallow: /

This rule tells all bots not to crawl the whole website. For a live site, this can be very harmful. Your pages may not be crawled properly. Your new content may not appear in search results. Your traffic may drop, and you may not know why at first.
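Because this rule is so risky, it can be worth checking for it before a file goes live. Below is a small Python sketch of such a check. It is a simplified reading of the file format, written for this article, and the function name blocks_whole_site is an assumption. It only looks for “Disallow: /” inside a group that applies to all bots.

```python
def blocks_whole_site(robots_text):
    """True if a group for all bots ("*") contains the rule "Disallow: /"."""
    applies_to_all = False
    in_agent_lines = False  # True while reading consecutive User-agent lines
    for line in robots_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        name, value = (part.strip() for part in line.split(":", 1))
        name = name.lower()
        if name == "user-agent":
            if not in_agent_lines:
                applies_to_all = False  # a new group starts here
            in_agent_lines = True
            applies_to_all = applies_to_all or value == "*"
        else:
            in_agent_lines = False
            if name == "disallow" and value == "/" and applies_to_all:
                return True
    return False


print(blocks_whole_site("User-agent: *\nDisallow: /"))        # True
print(blocks_whole_site("User-agent: *\nDisallow: /admin/"))  # False
```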

This is why generate robots.txt files spellmistake should never be ignored. A small rule can have a large effect. If your blog posts, product pages, service pages, or landing pages are blocked, your SEO can suffer badly. Search engines need access to your best pages so they can understand and rank them.

Generate Robots.txt Files Spellmistake and Indexing Problems

Crawling and indexing are not the same thing. Crawling means search bots visit and read your pages. Indexing means search engines store those pages so they can show them in search results. Robots.txt mainly controls crawling. But crawling problems can also lead to indexing problems.

For example, if Google cannot crawl an important page because of a bad robots.txt rule, it may not understand that page well. The page may not rank properly. In some cases, it may not appear in search results at all. This is a big issue for websites that depend on organic traffic.

In 2026, SEO is even more focused on clean website structure. Search engines are smarter, but they still need clear signals. A broken robots.txt file can send the wrong signal. That is why fixing generate robots.txt files spellmistake issues is a simple but powerful step for better SEO health.

How to Check Your Robots.txt File

Checking your robots.txt file is easy. You can open your browser and type your website name followed by /robots.txt. For example:

https://yourwebsite.com/robots.txt

If the file opens, you can read the rules inside it. Look carefully at each line. Check if User-agent, Disallow, Allow, and Sitemap are spelled correctly. Also check if the paths and URLs are correct.
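The same check can be done with a short script. The sketch below builds the robots.txt address from any page URL and downloads the file using only Python's standard library. The function names robots_url and fetch_robots are made up for this example, and yourwebsite.com is just the placeholder from above.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_url(page_url):
    """Build the robots.txt address for the site that page_url belongs to."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots(page_url, timeout=10):
    """Download robots.txt and return its text (needs network access)."""
    with urlopen(robots_url(page_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


print(robots_url("https://yourwebsite.com/blog/my-post?page=2"))
# https://yourwebsite.com/robots.txt
```

Calling fetch_robots with your own site address returns the file text, which you can then read line by line.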

You can also use Google Search Console to check crawling issues. It can help you see if Google is having trouble with your site. This is useful because some robots.txt mistakes are not easy to notice by just looking at the file.

Before making any changes, take a copy of your old robots.txt file. This way, if something goes wrong, you can restore it. This is a smart habit for any website owner. It helps you stay safe while fixing generate robots.txt files spellmistake problems.
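If you edit the file on your own computer or server, the backup step can be a few lines of Python. This is only a sketch. The function name backup_robots and the backup file naming pattern are assumptions, and you can use any naming you like.

```python
import shutil
from datetime import datetime
from pathlib import Path


def backup_robots(path):
    """Copy the file next to itself with a timestamp, and return the copy's path."""
    source = Path(path)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    # Example result: robots.txt.20260101-093000.bak (hypothetical naming pattern)
    target = source.with_name(f"{source.name}.{stamp}.bak")
    shutil.copy2(source, target)  # copy2 also keeps the file's timestamps
    return target
```

To restore, copy the backup over robots.txt again.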

Easy Ways to Fix Generate Robots.txt Files Spellmistake Issues

Fixing a robots.txt file is not hard when you know what to look for. The first step is to check the spelling of each rule. Make sure User-agent, Disallow, Allow, and Sitemap are written correctly. Even one missing dash can change how search engines read the file.

Next, check the file name. It should be exactly robots.txt. It should not be Robot.txt, robots.TXT, or robots.text. This may feel like a tiny detail, but search engines look for the correct file name in the correct place. If the name is wrong, bots may not find it.
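A file name check is easy to script as well. The sketch below compares a name with robots.txt and explains what looks wrong. The function name check_file_name and the 0.8 similarity cutoff are choices made for this example, not a standard.

```python
import difflib

EXPECTED_NAME = "robots.txt"


def check_file_name(name):
    """Return None if the name is correct, otherwise a short explanation."""
    if name == EXPECTED_NAME:
        return None
    if name.lower() == EXPECTED_NAME:
        return f"wrong letter case: rename {name!r} to {EXPECTED_NAME!r}"
    # Assumption: names at least 80% similar are treated as likely typos.
    similarity = difflib.SequenceMatcher(None, name.lower(), EXPECTED_NAME).ratio()
    if similarity >= 0.8:
        return f"looks like a typo: rename {name!r} to {EXPECTED_NAME!r}"
    return f"{name!r} is not a robots.txt file name"


for candidate in ["robots.txt", "Robot.txt", "robots.TXT", "robots.text"]:
    print(candidate, "->", check_file_name(candidate))
```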

Also check your sitemap link. The sitemap line should use the full URL. It should look like this:

Sitemap: https://example.com/sitemap.xml

If your website uses HTTPS, your sitemap should also use HTTPS. After fixing any generate robots.txt files spellmistake issue, test the file again. Do not guess. A quick test can save you from a big SEO problem later.

Best Tools to Generate Robots.txt Files Spellmistake-Free

Many website owners use tools to create robots.txt files. This is a good idea, especially for beginners. A generator can help you create a clean file without writing every line by hand. This can lower the chance of spelling mistakes.
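To show the idea, here is a very small generator written in Python. It is a sketch, not a copy of how any real tool works, and the function name generate_robots_txt and its parameters are made up. Because the rule names are written once in the code, they cannot be misspelled each time a file is created.

```python
def generate_robots_txt(disallow=(), allow=(), sitemap=None, user_agent="*"):
    """Build robots.txt text for one group of rules plus an optional sitemap line."""
    for path in list(allow) + list(disallow):
        if not path.startswith("/"):
            raise ValueError(f"path must start with '/': {path!r}")
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Allow: {path}" for path in allow]
    lines += [f"Disallow: {path}" for path in disallow]
    if not allow and not disallow:
        lines.append("Disallow:")  # an empty Disallow blocks nothing
    if sitemap:
        lines += ["", f"Sitemap: {sitemap}"]
    return "\n".join(lines) + "\n"


print(generate_robots_txt(disallow=["/admin/"],
                          sitemap="https://example.com/sitemap.xml"))
```

The output is the same three-rule file used as the basic example earlier in this article, with a blank line before the Sitemap line.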

SEO plugins like Yoast SEO and Rank Math can help WordPress users manage robots.txt files. These tools make the process easier. They also help you add basic rules without touching your website files directly. This is helpful if you are not a technical person.

Google Search Console and online robots.txt testing tools can also help you find mistakes. But remember one thing. A tool can help, but you should still review the file yourself. The best protection against generate robots.txt files spellmistake problems is a mix of tools and careful checking.

Best Practices for a Clean Robots.txt File

A good robots.txt file should be simple. Do not add too many rules unless you truly need them. The more rules you add, the easier it becomes to make a mistake. A short and clean file is often better than a long and confusing one.

You should never block important files that search engines need to read your page properly. For example, do not block CSS and JavaScript files unless you know exactly why. Search engines use these files to see how your page looks and works.

Also, avoid blocking your main pages. Your home page, blog posts, product pages, service pages, and important landing pages should usually be open to search bots. If you block them by mistake, your SEO can suffer. This is why regular checks are so important in 2026.

Robots.txt and Sitemap: Why They Work Together

Robots.txt and sitemap files are different, but they work well together. Robots.txt tells search engines where they can and cannot go. A sitemap tells search engines which pages are important and should be found.

Adding your sitemap inside robots.txt is a smart step. It gives search engines a clear path to your site’s important pages. A clean sitemap line can help bots discover your content faster.

Here is a simple example:

Sitemap: https://example.com/sitemap.xml

But this line must be correct. If the sitemap URL has a spelling mistake, wrong folder name, or wrong file name, search engines may not find it quickly. This is another reason why generate robots.txt files spellmistake issues should be fixed with care.

Advanced Tips to Protect Your SEO

If your website is small, your robots.txt file may only need a few lines. But if your website is large, robots.txt can help manage crawl budget. For example, you may want to block search pages, filter pages, or duplicate pages that do not help users much.

Still, you must be careful. Blocking the wrong folder can hurt your site. Before you block anything, ask yourself, “Is this page useful for visitors from Google?” If the answer is yes, do not block it without a strong reason.
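One way to make this question concrete is to test a draft file against a list of pages you care about. The Python sketch below does that with the standard urllib.robotparser module. The draft rules, the page URLs, and the function name find_blocked_pages are all made up for the demo, and Python's parser may not match every search engine exactly.

```python
from urllib.robotparser import RobotFileParser


def find_blocked_pages(robots_text, important_urls, user_agent="Googlebot"):
    """Return the important URLs that these rules would stop the bot from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return [url for url in important_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical draft: the last rule is a mistake that also blocks blog posts.
draft = """\
User-agent: *
Disallow: /search/
Disallow: /filter/
Disallow: /blog
"""

important = [
    "https://example.com/",
    "https://example.com/blog/robots-guide",
    "https://example.com/products/shoes",
]

print(find_blocked_pages(draft, important))
# ['https://example.com/blog/robots-guide']
```

If the list that comes back is not empty, fix the draft before you upload it.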

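You can also use meta robots tags with robots.txt. Robots.txt controls crawling. Meta robots tags can help control indexing. Both can work together, but they do different jobs. Keep one thing in mind: a bot can only read a meta robots tag on a page it is allowed to crawl. So if you rely on a noindex tag to keep a page out of search results, do not block that same page in robots.txt. For better SEO, use them carefully and check them often.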

In 2026, clean technical SEO still matters a lot. A clean robots.txt file, a correct sitemap, and a well-organized website make it easier for search engines to crawl and understand your site.

Conclusion

A robots.txt file may look like a small part of your website. But it can have a big effect on your SEO. One small spelling mistake can block important pages, confuse search engines, or slow down crawling.

That is why generate robots.txt files spellmistake is not just a small technical topic. It is something every website owner should understand. Whether you run a blog, online store, business site, or news site, your robots.txt file should be clean and correct.

The best way to stay safe is simple. Check your file. Use the right spelling. Keep the rules clear. Add the correct sitemap URL. Test everything before and after changes.

In the end, a clean robots.txt file helps search engines understand your website better. And when search engines understand your site better, your important pages have a better chance to be crawled, indexed, and found by the right readers.
